Our paper 'DreamSpace: Dreaming Your Room Space with Text-Driven Panoramic Texture Propagation' has been accepted by IEEE VR 2024.

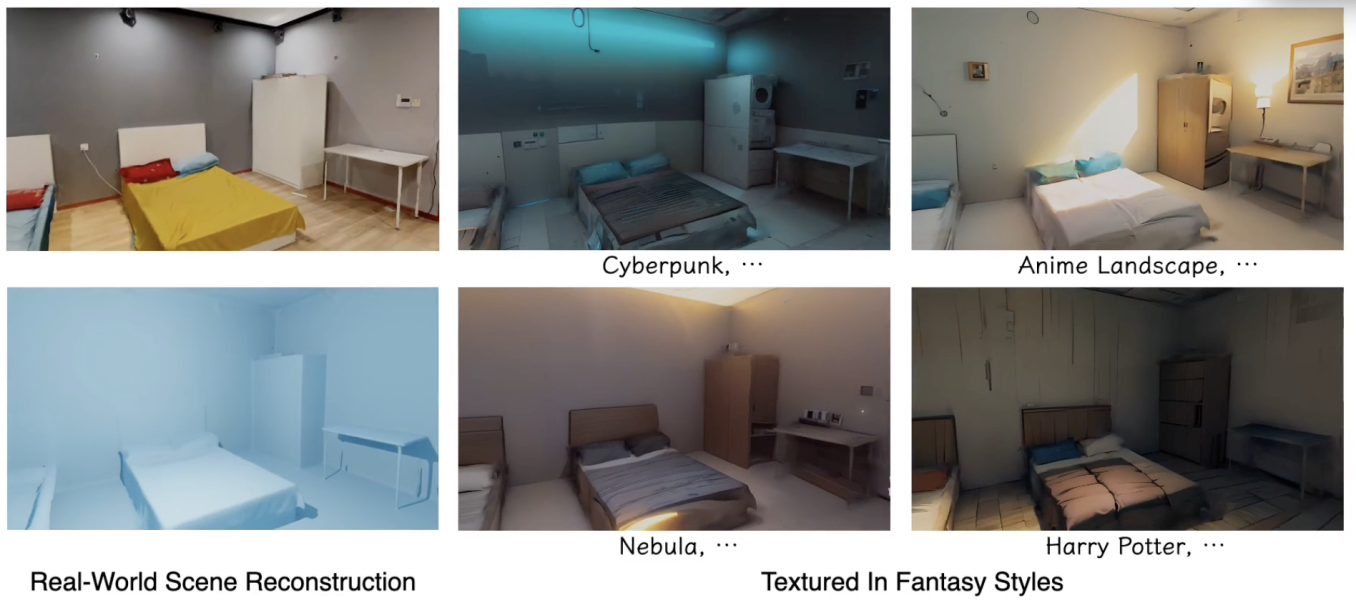

DreamSpace: Dreaming Your Room Space with Text-Driven Panoramic Texture Propagation

Diffusion-based methods have achieved prominent success in generating 2D media. However, achieving comparable results for scene-level mesh texturing in 3D spatial applications, e.g., XR/VR, remains difficult, primarily because of the intricate nature of 3D geometry and the need for immersive free-viewpoint rendering. In this paper, we propose a novel indoor scene texturing framework that delivers text-driven texture generation with enchanting details and authentic spatial coherence. The key insight is to first imagine a stylized 360° panoramic texture from the central viewpoint of the scene, and then propagate it to the remaining areas with inpainting and imitating techniques. To ensure that the textures are meaningful and aligned with the scene, we develop a novel coarse-to-fine panoramic texture generation approach with dual texture alignment, which considers both the geometry and the texture cues of the captured scenes. To cope with cluttered geometry during texture propagation, we design a separated strategy, which conducts texture inpainting in confident regions and then learns an implicit imitating network to synthesize textures in occluded areas and tiny structures. Extensive experiments and an immersive VR application on real-world indoor scenes demonstrate the high quality of the generated textures and the engaging experience on VR headsets.
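To make the idea of a panoramic texture seen from the central viewpoint more concrete, here is a minimal sketch of how a 3D surface point could be mapped to a pixel in a 360° equirectangular panorama. This is an illustration only and is not taken from the DreamSpace code: the function names `point_to_equirect_uv` and `uv_to_pixel`, the y-up axis convention, and the choice of +z as the panorama center direction are all assumptions.

```python
import math


def point_to_equirect_uv(point, center=(0.0, 0.0, 0.0)):
    """Map a 3D point to (u, v) on an equirectangular panorama.

    Assumed convention (hypothetical, not from the paper): y is up,
    longitude is measured from +z toward +x, u and v lie in [0, 1],
    and v = 0 is the top of the image.
    """
    dx = point[0] - center[0]
    dy = point[1] - center[1]
    dz = point[2] - center[2]
    r = math.sqrt(dx * dx + dy * dy + dz * dz)
    if r == 0.0:
        raise ValueError("point coincides with the panorama center")

    lon = math.atan2(dx, dz)                       # in [-pi, pi]
    lat = math.asin(max(-1.0, min(1.0, dy / r)))   # in [-pi/2, pi/2]

    u = (lon / (2.0 * math.pi) + 0.5) % 1.0        # wrap around horizontally
    v = 0.5 - lat / math.pi
    return u, v


def uv_to_pixel(u, v, width, height):
    """Convert normalized (u, v) to integer pixel indices, clamped to the image."""
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return x, y


if __name__ == "__main__":
    # A point straight ahead of the viewer lands at the panorama center.
    u, v = point_to_equirect_uv((0.0, 0.0, 1.0))
    print(uv_to_pixel(u, v, 2048, 1024))
```

A lookup like this only gives a color for surfaces that are visible from the center; occluded regions have no valid panorama pixel, which is the gap that the inpainting and imitating stages described above are meant to fill.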

PDF: https://arxiv.org/abs/2310.13119

Code: https://github.com/ybbbbt/dreamspace

Video: https://www.youtube.com/watch?v=Ni5kXdj7cfw